Welcome to our new blog! It’s been re-built, re-designed, and moved to a new home on 1Password.com. It’s the fastest and most efficient experience we can give readers and we really love it. Learn how we built it as a static, serverless site with Hugo and AWS.

Our blog has seen many homes over the years, going all the way back to the original one nearly 13 years ago (which is impressively still live on the internet today).

You may have already noticed the new blog you’re reading now, as it’s been around for a couple of months, but today we’re happy to officially announce it, along with the retirement of our previous one at blog.agilebits.com. Be sure to subscribe via RSS and follow us on Twitter or Facebook to stay up to date with our news, announcements, security tips, and all things 1Password.

The previous blog was built with WordPress, which served us well for the past decade, but we figured we could do better and build something more lightweight, fast, and secure. And of course there’s also a gorgeous new design that not only matches the style of our other sites but looks amazing all around. I mean, look at that header artwork at the top of all the posts!

Building a strong foundation with Hugo

We love using static sites for their performance, security, and simplicity. The entire site’s content is created at build time. No server logic, no databases, just plain old HTML files.

We chose Hugo a while ago for our main 1Password.com site, so migrating our blog to it as well was a natural fit. The builds are quick, and it’s easy to use, for both our developers and content writers. Each blog post is simply a Markdown file and it all lives in a GitLab project. Merge requests make for a perfect way to do content reviews.

Hugo is a very active project too – we’ve been taking advantage of several recently added features, like built-in SCSS processing and asset fingerprinting, which is useful for cache busting.
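To make the cache-busting idea concrete, here is a minimal sketch of content fingerprinting in Python. It is not Hugo's implementation; the function name `fingerprint_name` and the 16-character digest length are assumptions made for illustration. The point is only that the file name changes whenever the content does, so a CDN or browser can cache each version indefinitely.

```python
import hashlib
from pathlib import PurePosixPath


def fingerprint_name(path: str, content: bytes, digest_len: int = 16) -> str:
    """Return `path` with a short content hash inserted before the extension.

    Hypothetical helper for illustration; Hugo has its own built-in
    fingerprinting and its output naming may differ.
    """
    digest = hashlib.sha256(content).hexdigest()[:digest_len]
    p = PurePosixPath(path)
    return str(p.with_name(f"{p.stem}.{digest}{p.suffix}"))


# Two versions of the same stylesheet get two different file names,
# so an old cached copy is never served for the new content.
old = fingerprint_name("css/main.css", b"body { color: black; }")
new = fingerprint_name("css/main.css", b"body { color: navy; }")
print(old)
print(new)
```

Because the name is derived only from the content, rebuilding an unchanged file produces the same name and existing caches stay valid.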

Faster performance ⚡

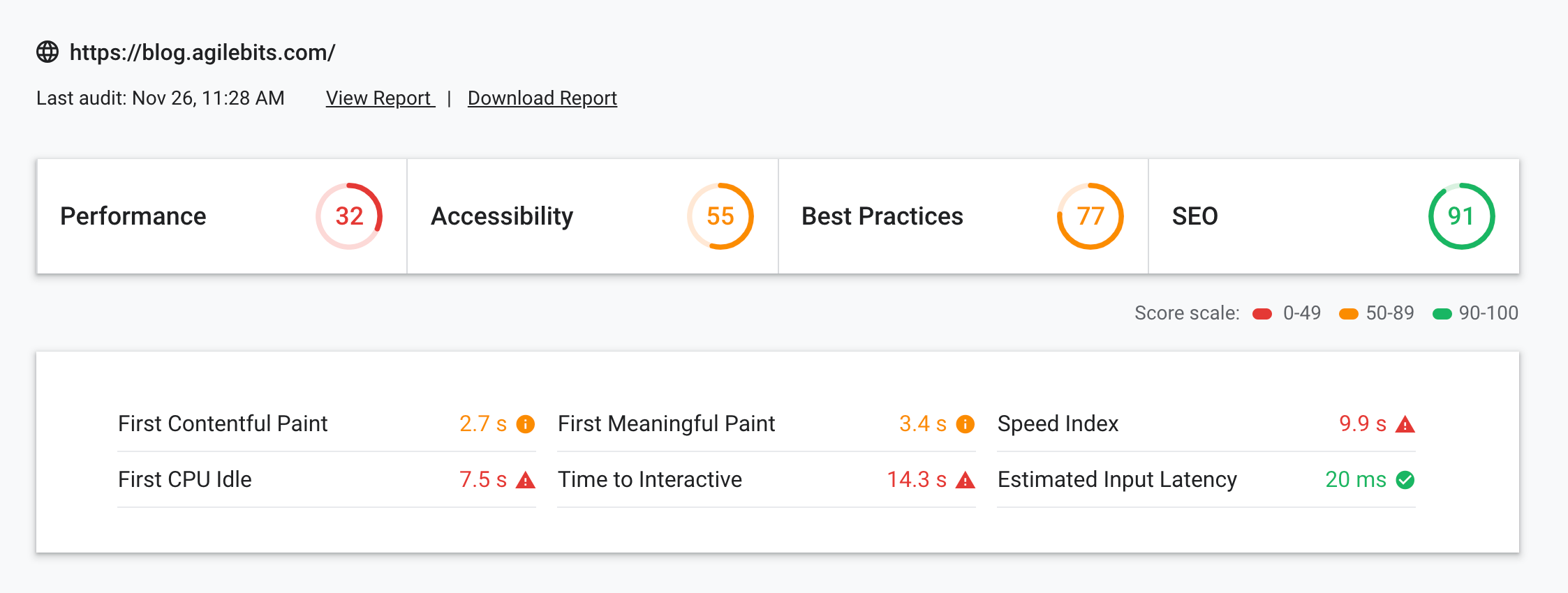

Building something that loads super fast was essential to us. This is an area our previous WordPress blog certainly fell short on:

Having a fully static site without the overhead of dynamically generating pages is a great start. Plus it allows 100% of the content that is loaded to be served by a content delivery network (CDN) which uses local caches near you to minimize network delays.

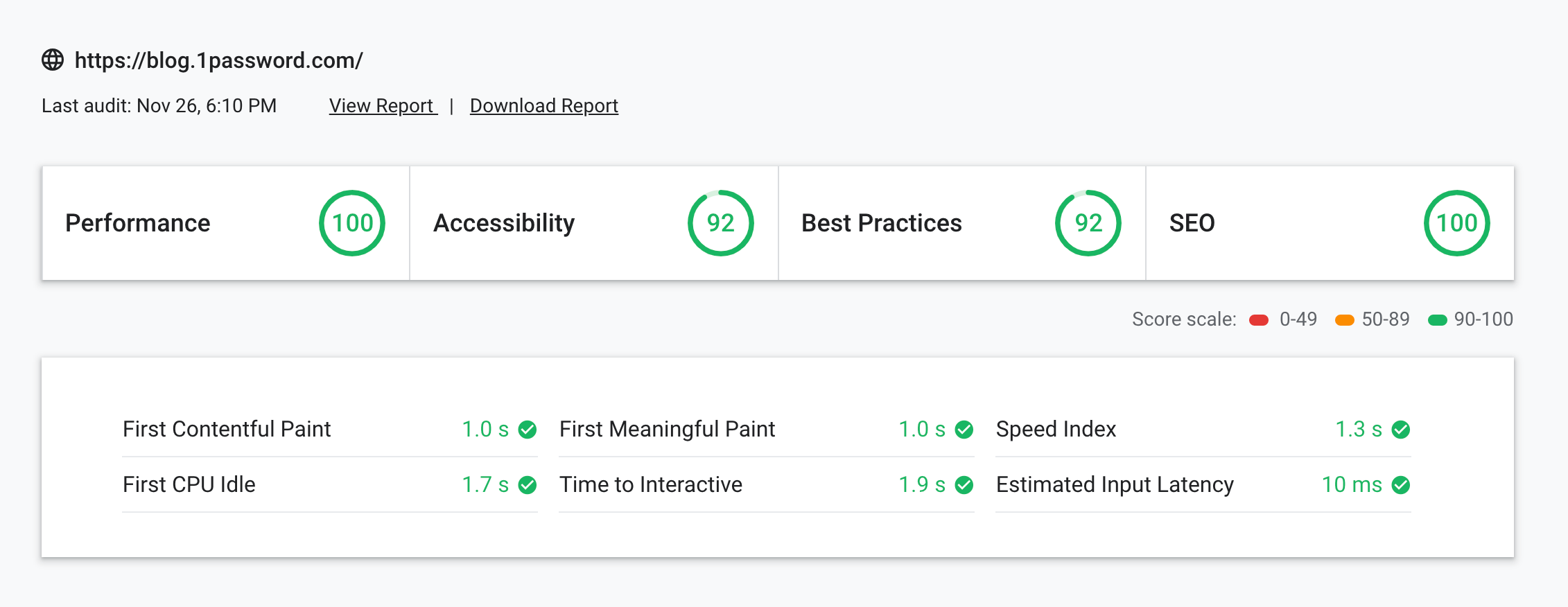

Keeping the page size small is critical as well. Our home page now weighs just 800kb and uses less than 20 network requests, with much better results:

These tests were performed with Google’s new Lighthouse audit tool, which is a great way to see how your site is doing. It points out modern best practices you might not be following, such as optimizing images and minifying your CSS and JavaScript. If you run a website, I’d highly recommend giving your site a test. You’ll almost certainly learn some good tips.

Stronger Security 🔒

Security is at the top of our minds in everything we do, and our blog is no exception.

Using a first-party solution that’s fully in our control is imperative, in addition to adhering to the same front-end security best practices we use with everything on 1Password.com, such as having a strict Content Security Policy. It’s also extremely important to us for changes to be made in a trackable and reviewable manner (with Git), along with having a locked-down deployment process.
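As a rough illustration of what a strict Content Security Policy looks like, the sketch below assembles a header value from a set of directives. The directive values are hypothetical and are not the policy used on 1Password.com; `build_csp` is a helper name invented for this example.

```python
def build_csp(directives: dict[str, list[str]]) -> str:
    """Join CSP directives into a single Content-Security-Policy header value."""
    return "; ".join(
        f"{name} {' '.join(sources)}" for name, sources in directives.items()
    )


# Hypothetical strict policy: deny everything by default, then allow
# only same-origin scripts, styles, and images.
EXAMPLE_POLICY = {
    "default-src": ["'none'"],
    "script-src": ["'self'"],
    "style-src": ["'self'"],
    "img-src": ["'self'"],
}

print(build_csp(EXAMPLE_POLICY))
# default-src 'none'; script-src 'self'; style-src 'self'; img-src 'self'
```

Starting from `default-src 'none'` and opting in per resource type is one common way to keep a policy strict, since anything not explicitly listed is blocked.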

Going back to the benefits of static sites, there’s a lot less that can go wrong with static HTML files versus a complex platform like WordPress.

Serverless Infrastructure 🏗

The blog runs on a serverless setup with Amazon Web Services (AWS), taking advantage of several of their services.

It starts with S3, short for Simple Storage Service, which is cloud file storage – this is where all the Hugo generated content lives. It’s a perfect place for storing static resources and is very reliable with almost no downtime.

On top of that is CloudFront, a content delivery network (CDN) that speeds up the serving of our content through a global network of edge locations. When you load the site, you’ll get routed to an edge location near you which provides the lowest delay. It also handles extras like the custom domain, TLS, HTTP/2, and GZIP, all with no additional configuration.

We also use Lambda@Edge, a newer AWS service that allows small snippets of code to run at the edge locations in response to CloudFront requests. When we tried to build a similar static site setup a few years ago, we were left wanting a few little server-side features, like the ability to add HTTP headers and perform redirects/rewrites. The introduction of Lambda@Edge has solved that for us, allowing a static, serverless site but still lending a few pieces of functionality that would normally only be available with a traditional server.
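The two features mentioned above, adding HTTP headers and performing redirects/rewrites, can be sketched as a pair of Lambda@Edge-style handlers in Python. This is an illustrative sketch rather than the code running on this blog: the redirect table, the header values, and the handler names are all assumptions. The event shape (`Records[0].cf.request` / `Records[0].cf.response`) and the header format (lowercase name mapped to a list of `key`/`value` pairs) follow the CloudFront event structure.

```python
# Hypothetical redirect table and header values, for illustration only.
REDIRECTS = {"/old-post/": "/new-post/"}
SECURITY_HEADERS = {
    "Strict-Transport-Security": "max-age=63072000; includeSubDomains",
    "X-Content-Type-Options": "nosniff",
}


def handle_request(event, context=None):
    """Request trigger: redirect known paths, rewrite directory URLs."""
    request = event["Records"][0]["cf"]["request"]
    uri = request["uri"]

    target = REDIRECTS.get(uri)
    if target is not None:
        # Returning a response object short-circuits the request.
        return {
            "status": "301",
            "statusDescription": "Moved Permanently",
            "headers": {"location": [{"key": "Location", "value": target}]},
        }

    # Rewrite "/some/path/" to "/some/path/index.html" so the storage
    # origin can find the generated file.
    if uri.endswith("/"):
        request["uri"] = uri + "index.html"
    return request


def handle_response(event, context=None):
    """Response trigger: attach security headers to every response."""
    response = event["Records"][0]["cf"]["response"]
    for name, value in SECURITY_HEADERS.items():
        response["headers"][name.lower()] = [{"key": name, "value": value}]
    return response
```

Each function would be attached to the matching CloudFront trigger (a request event for the first, a response event for the second); both can be exercised locally by passing in a dictionary shaped like the event.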

In addition to CloudFront providing support for HTTPS, AWS Certificate Manager makes issuing and managing your TLS certificate effortless, and it seamlessly integrates with CloudFront. HTTPS is the future of the web – every site should support it (even your blog). And if you’re using AWS, there’s really no excuse as they make it incredibly easy, and free.

We manage all this with Terraform, which is a tool for writing infrastructure setup as code. We’ve talked previously about how we already use this for our 1Password.com service, so I’ll let you check out that post if you’d like to learn more. For this project, Terraform made it simple to create an identical internal testing site for previewing posts before we publish them.

Deployment 🚀

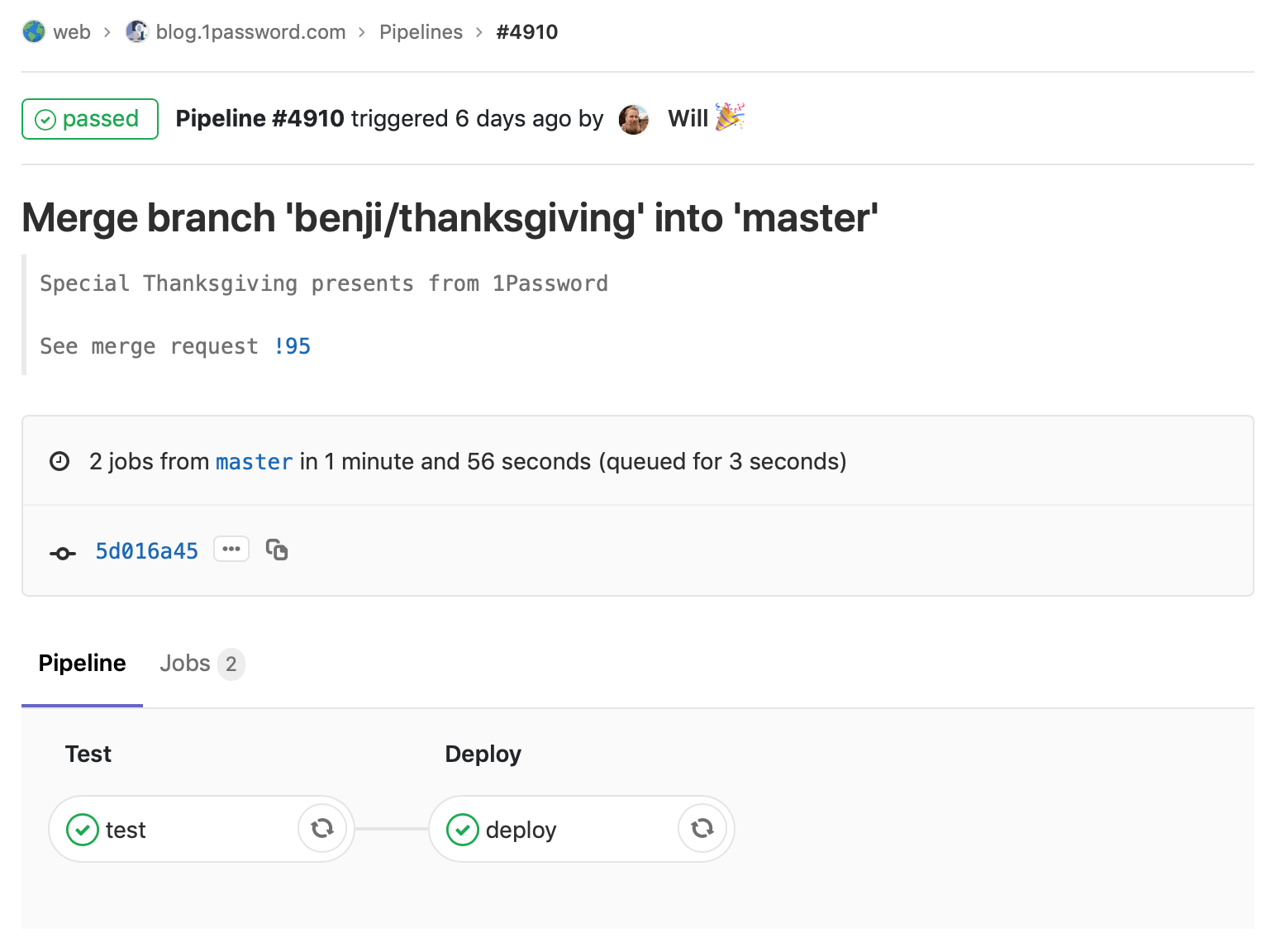

The final step was setting up automatic building and deployment of all this. We decided on GitLab CI, which is built right into GitLab projects.

To run the CI build, you’ll need a Docker container with your dependencies installed, such as Hugo, Terraform, AWS CLI, and Node. The same container also works perfectly for those who want to build it locally. All you need to install is Docker Desktop.

Then it’s as simple as adding a few commands to the GitLab CI config file. From there, it builds the site with Hugo, runs some linters to make sure all content is as good as it can be, syncs the static files to S3, applies Terraform changes if needed, and creates a CloudFront invalidation.

This happens with every commit – it deploys to our internal preview site, and then to production on every merge to master.
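The pipeline described above can be sketched as a short Python script that picks a target environment from the branch name and lists the commands to run. This is an assumption-laden outline, not our actual GitLab CI config: the bucket names, distribution IDs, and function names are placeholders, and the linting step is omitted because the specific linters aren't named here. The commands are only assembled and printed, never executed.

```python
import shlex

# Placeholder targets; real bucket names and distribution IDs would differ.
TARGETS = {
    "preview": {"bucket": "example-blog-preview", "distribution_id": "EXAMPLEPREVIEW"},
    "production": {"bucket": "example-blog-production", "distribution_id": "EXAMPLEPROD"},
}


def environment_for(branch: str) -> str:
    """Merges to master go to production; every other commit goes to preview."""
    return "production" if branch == "master" else "preview"


def deploy_commands(branch: str, site_dir: str = "public") -> list[list[str]]:
    """Return the build and deploy commands for a branch, in order."""
    target = TARGETS[environment_for(branch)]
    return [
        # Build the static site with Hugo.
        ["hugo", "--minify"],
        # Sync the generated files to S3, removing files that no longer exist.
        ["aws", "s3", "sync", f"{site_dir}/", f"s3://{target['bucket']}/", "--delete"],
        # Apply any infrastructure changes.
        ["terraform", "apply", "-auto-approve"],
        # Invalidate the CDN cache so the new content is served.
        ["aws", "cloudfront", "create-invalidation",
         "--distribution-id", target["distribution_id"], "--paths", "/*"],
    ]


for command in deploy_commands("master"):
    print(shlex.join(command))
```

Keeping the commands as data makes it easy to review exactly what would run for a given branch before wiring them into a CI job.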

Beyond the blog

We’re really happy with how this turned out and are now using this exact setup across many of our web properties, including the main 1Password.com site and even Watchtower. If working on this kind of stuff interests you, we’re currently hiring a front-end web developer.

by Jasper Patterson on
